Posts on this page:

- AD CS Partitioned CRLs - Introduction (part 1)

- Key Recovery Agent certificate management

- I'm moving, attempt #2

- Ubiquiti UDM Pro and IPv6 Router Advertisement issue

- I’m moving!

Hello all! This blog post opens the "AD CS Partitioned CRLs - A Comprehensive Guide" series. The first part is an introduction.

All posts in this series:

- Part 1 - Introduction (current)

- Part 2 - Design Strategies

- Part 3 - Configuration Components

- Part 4 - Recipe guide

- Part 5 - Partitioned CRL API and Events

Starting with the 2025 10B update (October 14, 2025), AD CS on Windows Server 2019 and newer will receive a new feature called Partitioned Certificate Revocation List (CRL), or Partitioned CRL. CRL partitioning is the process of splitting a single CRL into a set of smaller CRLs. The following updates will enable this feature:

Let's recall why partitioned CRLs are needed and the current state of the subject before we dig into the new update.
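As a rough illustration of what "splitting a single CRL into a set of smaller CRLs" means, here is a minimal Python sketch. The function name `partition_crl` and the "serial number modulo partition count" rule are assumptions made purely for illustration; they are not how AD CS assigns certificates to partitions.

```python
from collections import defaultdict


def partition_crl(revoked_serials, partition_count):
    """Group revoked serial numbers into up to partition_count smaller lists."""
    if partition_count < 1:
        raise ValueError("partition_count must be at least 1")
    partitions = defaultdict(list)
    for serial in revoked_serials:
        # Hypothetical assignment rule (NOT the AD CS rule):
        # serial number modulo the number of partitions.
        partitions[serial % partition_count].append(serial)
    return dict(partitions)


# Six made-up revoked serial numbers split across three partitions.
revoked = [0x1001, 0x1002, 0x1003, 0x1004, 0x1005, 0x1006]
parts = partition_crl(revoked, 3)
for index in sorted(parts):
    print(index, [hex(s) for s in parts[index]])
```

The point of the sketch is only that a client checking one certificate needs to download one small list instead of the full one.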

Read more →

Hello everyone!

This is a good time for a new blog post! Today I want to share some thoughts on Key Recovery Agent (KRA) certificate management.

What is KRA and Key Archival

Let's refresh what private key archival is in the AD CS context. Key archival is the process of securely storing subscribers' (clients') private keys in the CA database for backup purposes, should a client lose access to its private key. Key archival is primarily used to implement a centralized long-term backup process for encryption keys (email, EFS, document encryption).

The whole idea may not be apparent at first glance, but there is a strong reason behind it: encryption keys are needed to decrypt documents/files/emails even after the certificate expires, so you may need an encryption key long after its expiration. Expired certificates are not normally backed up as part of the regular backup process or stored in a long-term backup set. When a certificate expires, we normally renew it and delete the old one. And you will be stuck if such encryption certificates and their keys are lost. This is why Microsoft implemented a separate backup process for encryption certificates that stores them in the CA database. CAs are long-lived entities that can live for decades and survive multiple migrations. And the CA database can be easily backed up with the regular backup process, so the backup preserves the complete history of the database content, including historical keys.

This may look insecure: storing private keys in a database is never a good idea, right? This is where the Key Recovery Agent (KRA) comes into play. All private keys stored in the CA database are encrypted with one or more KRA certificates. Even if someone steals the CA database and dumps it, client private keys are stored in encrypted blobs, and neither the CA nor the attacker has access to the KRA private keys needed to decrypt them. Here is a timeline diagram that shows the key archival process:

Read more →

Six years ago I joined PKI Solutions, and as part of that move I wasn't allowed to blog about PKI/DEV stuff here. Today was my last official working day at PKI Solutions and I'm back here! I spent six very interesting years there, and we did really incredible work "like no one ever seen before" ©Trump. We went from a rough idea to a quite mature product: PKI Spotlight. I was responsible for architecture design, concepts, core/critical component development, and random really cool stuff. It was a very challenging journey, and nothing went in a straight line. Throughout the process I learned quite a lot of new stuff, such as Azure, DevOps, containers, etc.; it was a non-stop learning process. At PKI Solutions I met some really good men: Ján Sokoly, Nick Sirikulbut, Mike Bruno, Jake Grandlienard and many others. But things are changing, the company is growing, people come and go, and it's time for new opportunities and challenges.

As I mentioned in a previous post, I brought my open-source projects (PSPKI and others) to the company's GitHub account. In return, I got permission to work on them during my work hours (with some conditions, but anyway), which was very appealing. Throughout my time at PKI Solutions, I continued to support these tools, and we created some new open-source projects as part of the company's commitment to the community. As part of my resignation, I was given these tools back. I want to thank Mark B. "the PKI guy" Cooper (PKI Solutions president), who is a great man and released them without conditions. So, basically almost the same stuff is back:

- PowerShell PKI module

- pkix.net library

- ASN.1 Editor and ASN parser library

I didn't bring SSL Certificate Verifier, because it looks good enough in its current state and I have no particular plans for it. I will continue to support the existing tools, though I need to do some work first, such as updating documentation on my website, internal tooling, Azure DevOps pipelines and so on. And, of course, I will occasionally blog about some PKI/PowerShell/CryptoAPI stuff. So stay tuned!

Disclaimer: I’m by no means an IPv6 expert and I’m not going to teach different IPv6 configuration options and basics. In this post, I will focus solely on one specific subject.

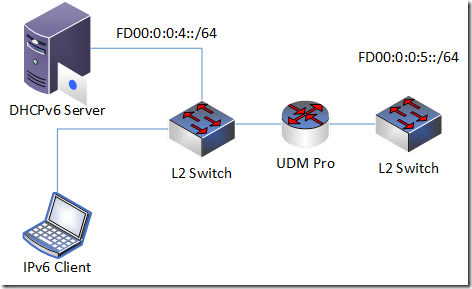

As part of my IPv6 learning path I played with stateful DHCPv6 and spent whole weekends sorting out one interesting issue. Here is my simple network setup:

Basically, two private networks connected to a router.

- UDM Pro router

- L2 switch

- DHCPv6 stateful server

- DHCPv6 client

- IPv6 network: fd00:0:0:4::/64

The problem: clients receive an IPv6 address from DHCPv6 but cannot communicate within the same network using ULA (fd00) addresses.
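When chasing this kind of problem, it helps to first rule out a plain addressing mistake. The sketch below uses Python's standard `ipaddress` module to confirm that the prefix is a unique local (fc00::/7) prefix and that two leased addresses fall inside the same /64. The two client addresses and the `same_subnet` helper are hypothetical, made up for illustration. The check only validates addressing and does not diagnose the communication problem itself.

```python
import ipaddress

# Unique local address range and the network from the setup above.
ULA_RANGE = ipaddress.IPv6Network("fc00::/7")
lan = ipaddress.IPv6Network("fd00:0:0:4::/64")


def same_subnet(a, b, network):
    """Return True if both addresses belong to the given network."""
    addr_a = ipaddress.IPv6Address(a)
    addr_b = ipaddress.IPv6Address(b)
    return addr_a in network and addr_b in network


# Hypothetical DHCPv6 leases; replace with the addresses your clients received.
client_a = "fd00:0:0:4::100"
client_b = "fd00:0:0:4::101"

is_ula = lan.subnet_of(ULA_RANGE)
shares_prefix = same_subnet(client_a, client_b, lan)
print("prefix is unique local:", is_ula)
print("clients share the /64:", shares_prefix)
```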

Read more →

Hello everyone!

Today I’m excited to announce that I’m changing my position and moving to a great team at PKI Solutions starting July 1!

As you may know, I recently graduated with a bachelor’s degree in computer science, and it is a great time to take another step forward. I wanted to progress in the PKI area and become an even stronger specialist. Unfortunately, there is no PKI market here in Latvia (where I live), so I had two options: become another regular software developer here in Latvia or find opportunities outside of my country. I was looking for a not very big team where I could develop myself and (this is very important) where the team could benefit from my knowledge and experience. While looking for job opportunities I realised that I didn’t fit many positions well, because I was either overqualified or underqualified for the particular position.

I heard about Mark “The PKI Guy” Cooper from his years at Microsoft and knew him as a world-class PKI specialist. Mark is the president of PKI Solutions; they offer PKI consulting services and run PKI training. I didn’t see myself there, because PKI Solutions is US-based and relocation to the US isn’t an option for me, but I pinged him anyway in case he had options in the EU. Otherwise, PKI Solutions is a perfect place where both parties can benefit: I can continue my self-development in the area and the company gets strong knowledge and experience in programming with PKI. Surprisingly, Mark showed a high interest in my work and heritage and made an offer which doesn’t require relocation.

During the negotiation of the deal, Mark showed himself to be an awesome man with a clear vision of his business’ needs and of how I can fill certain gaps to make PKI Solutions a solid all-around team where each piece consists of strong specialists in particular areas. In addition, Mark expressed a wish for me to continue supporting all my public work: blogging, technical forums and open-source projects. These days community is vital for the IT market: you have to support the community and you will get paid back eventually. And I will play an integral role in making PKI Solutions a more community-oriented company through knowledge sharing.

Along with my personal move, I’m moving my public projects to PKI Solutions as well, because we will build new tools on top of existing frameworks. These tools are moved:

I will continue developing these tools as open-source projects. Nothing will change for existing users; it is only a brand change.

As a PKI Solutions employee I will continue blogging about PKI and CryptoAPI at https://www.pkisolutions.com/author/crypt32/. I will continue to maintain this blog in the future so no existing link will break, but all new PKI-related posts will go to the new blog.