Update 06.04.2020: clarified the last section by mentioning that everything expressed in this blog post is primarily opinion-based.

Update 16.06.2022: updated recommendations on HTTP URL host name.

Update 20.11.2025: updated LDAP support in Partitioned CRL feature.

Information in this article should be used only when you plan ADCS installation.

Hello Internet!

Today I’m going to describe one of the ugliest and, at the same time, one of the most important topics in PKI: chain building and revocation checking, and how to design and plan them in line with best practices.

Motivation

The certificate chaining engine and revocation checking are PKI fundamentals, and your PKI success depends on how well you understand this subject and how well it is implemented in your environment. When it is implemented incorrectly, you become a candidate for another topic starter on the TechNet forums, because certain applications stop working as expected: you cannot log on to the domain with a smart card, you cannot connect to a remote site over VPN, OpsMgr/SCCM cannot contact managed clients, and so on. In the worst case, you cannot start your CA server. Actually, revocation checking is the hottest topic on the TechNet Security forum and, apparently, it is hotter than Tera Patrick herself.

Before you start

Some time ago I posted an article that describes certificate chaining engine fundamentals: Certificate Chaining Engine (CCE). I strongly recommend reading it to get a better understanding of how it works. The rest of this post builds on that article.

Getting started

If you are familiar with how CCE works, we can identify tasks we need to solve:

- Determine the URL type (http or LDAP) and scope (internal or internal + external);

- Provide a target URL;

- Configure CA server.

URL type and scope

First, we need to determine what kind of URLs and how many URLs we need. By default, a Windows CA uses two URLs with different access protocols (for both the CDP and AIA extensions): LDAP and HTTP. For example, the default URLs for a default CA installation are:

- CRL Distribution Points (CDP):

[1]CRL Distribution Point

Distribution Point Name:

Full Name:

URL=ldap:///CN=Contoso%20CA,CN=DC1,CN=CDP,CN=Public%20Key%20Services,CN=Services,CN=Configuration,DC=contoso,DC=com?certificateRevocationList?base?objectClass=cRLDistributionPoint

URL=http://dc1.contoso.com/CertEnroll/Contoso%20CA.crl

- Authority Information Access (AIA):

[1]Authority Info Access

Access Method=Certification Authority Issuer (1.3.6.1.5.5.7.48.2)

Alternative Name:

URL=ldap:///CN=Contoso%20CA,CN=AIA,CN=Public%20Key%20Services,CN=Services,CN=Configuration,DC=contoso,DC=com?cACertificate?base?objectClass=certificationAuthority

[2]Authority Info Access

Access Method=Certification Authority Issuer (1.3.6.1.5.5.7.48.2)

Alternative Name:

URL=http://dc1.contoso.com/CertEnroll/DC1.contoso.com_Contoso%20CA.crt

URL order is important. A CryptoAPI client always attempts the first URL in the list to retrieve the file. The second URL is checked only when the first URL times out (15-second timeout). Therefore, to avoid possible revocation checking delays, the most accessible URL must be placed first!

While this was enough for most installations 10 years ago, it is not very practical nowadays. LDAP URLs can mostly be accessed only by Active Directory forest members. If you have clients outside of your domain, or users who cannot reach a domain controller (for example, an employee establishing a VPN connection to the corporate network from the internet), then an LDAP URL is not their best option and you should use HTTP, as it is supported by any kind of client (even non-Windows).

Another benefit of HTTP transport is support for the ETag header and the max-age (Cache-Control) value, which have no equivalent in LDAP. The ETag header greatly improves the revocation checking experience, especially for long-lived CRLs (several months). Offline root CAs usually issue CRLs that are valid for 6-12 months, and these CRLs are usually cached on clients until they expire. If a subordinate CA is revoked, clients won't learn about the revocation for a long time, because they use a cached CRL that is still valid. With ETag, clients can periodically query the CRL distribution point for a CRL update by passing the cached ETag value in the request. If the HTTP server responds with the same ETag value, the CRL is unchanged and the client keeps using the cached CRL. If the ETag in the response doesn't match the one in the request, the CRL was updated on the server, and the client downloads it and updates the local cache even though the cached CRL is still valid. The ETag check period is determined by the max-age value. The default value for the IIS web server is 1 week. With LDAP, these features are not available, and clients remain uninformed about the revocation until the cached CRL expires. More details on CryptoAPI support for HTTP headers: Using ETags and Max-age in a Request.
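
The ETag exchange described above can be modelled in a few lines. This is only a sketch of the generic HTTP revalidation logic (a matching ETag yields 304 Not Modified, a different one yields 200 with a new body). It is not CryptoAPI code, and the function name and the sample ETag values are made up for illustration.

```python
from typing import Optional, Tuple


def revalidate(cached_etag: Optional[str], server_etag: str) -> Tuple[int, bool]:
    """Model a conditional GET for a CRL.

    The client sends its cached ETag (If-None-Match). Returns the HTTP
    status the server would answer with and whether the client has to
    download the CRL again.
    """
    if cached_etag is not None and cached_etag == server_etag:
        # Same ETag: CRL unchanged, the cached copy stays in use.
        return 304, False
    # No cached copy or a different ETag: the CRL was republished.
    return 200, True


# The root CA republished its CRL after revoking a subordinate CA,
# so the ETag on the web server changed (hypothetical values).
status, download = revalidate('"crl-v1"', '"crl-v2"')
print(status, download)
```

The point of the model is the second branch: the client refreshes its cache even though the cached CRL has not expired yet.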

With the September 2025 (9B) update, CAs based on Windows Server 2019, 2022, 2025 and newer received a new feature called Partitioned CRL. This feature explicitly drops support for LDAP URLs: the CA will fail to start if Partitioned CRL is enabled and at least one LDAP URL is found. The following blog post describes this limitation: AD CS Partitioned CRLs - Configuration Components (part 3).

The URL samples above show two URLs in each extension (CDP and AIA) for redundancy. Say, if a client is inside the domain network, it uses the first URL. When it is outside of the domain network, the first URL obviously fails and CryptoAPI tries the second URL. In this case, clients outside of your domain network experience long delays during revocation checking (15-20 seconds) for each failed URL. The entire chain building and revocation checking process may take about 1 minute or so.
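
To make the cost of URL ordering concrete, here is a rough model of ordered retrieval. It assumes each unreachable URL costs one full 15-second timeout before the next URL is tried. The function name and the shortened URLs are illustrative only, and the real client behaviour has more nuances than this.

```python
from typing import Iterable, Optional, Set, Tuple


def retrieval_delay(urls: Iterable[str], reachable: Set[str],
                    timeout_seconds: int = 15) -> Tuple[Optional[str], int]:
    """Try URLs in the order they are listed in the extension.

    Returns the first reachable URL (or None) and the number of seconds
    lost on timeouts before it was reached.
    """
    wasted = 0
    for url in urls:
        if url in reachable:
            return url, wasted
        wasted += timeout_seconds  # one full timeout per failed URL
    return None, wasted


cdp = ["ldap:///CN=Contoso%20CA,...", "http://dc1.contoso.com/CertEnroll/Contoso%20CA.crl"]
external_client_can_reach = {"http://dc1.contoso.com/CertEnroll/Contoso%20CA.crl"}

url, lost = retrieval_delay(cdp, external_client_can_reach)
print(url, lost)
```

With a two-certificate chain that needs one CDP and one AIA retrieval per certificate, four such 15-second delays already add up to about a minute.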

As a general practice, it is recommended not to use LDAP URLs at all and to use only HTTP URLs, even if your clients are exclusively internal domain members.

The file:// protocol is no longer supported for file retrieval (when published in the extension).

This design simplifies URL management and guarantees that all clients will be able to download the file. It is OK to have a single URL in the CDP and AIA extensions. Of course, it is recommended to provide some form of high availability by external means, for example, by publishing CRL/CRT files on an NLB cluster. Also, the CA server SHOULD NOT act as a web server to serve CRL/CRT files. There should be a separate web server.

OCSP

OCSP (Online Certificate Status Protocol) is a lightweight revocation checking protocol that runs over HTTP. The idea is that instead of downloading a whole (probably large) CRL file to check a single certificate, the client queries a web service for the revocation status of that particular certificate. While CRL download size is variable and depends on the number of revoked certificates, the size of an OCSP request/response transaction is roughly constant at ~2.5 KB. The OCSP caching mechanism is the same as with CRLs: each OCSP response is cached for the period of time specified in the response.

Another good feature of OCSP is OCSP Stapling. A certificate owner (for example, an IIS web server) can get a signed OCSP response for its own certificate and pass this OCSP response to web clients along with the TLS certificate during the TLS handshake. The client is no longer required to query OCSP or download a CRL to verify the TLS certificate's revocation status; it safely uses the stapled OCSP response sent by the server. Since the OCSP response is digitally signed by the OCSP server, there is no way for the certificate owner (web server) to tamper with or otherwise manipulate the OCSP response.

There is, though, one downside to OCSP: bulk client certificate validation, for example, smart card authentication or 802.1X wireless authentication certificates. With OCSP, RADIUS servers (or domain controllers) are forced to query the same OCSP server when validating each client certificate. As a result, total OCSP traffic may exceed the CRL size, and it would be more reasonable to download a single CRL, cache it, and use it for every client certificate validation. Microsoft CryptoAPI handles such cases as follows: after sending 50 OCSP requests to the same OCSP responder for the same issuer, the CryptoAPI client stops querying OCSP and downloads the CRL if possible (by using URLs specified in the CDP extension of client certificates). More on this behavior: Determining Preference between OCSP and CRLs.

Another point to consider is the use of OCSP servers for each CA type. OCSP is recommended for CAs that issue certificates to end clients (users, computers, services) and where revocation intensity is relatively high. OCSP doesn't bring any benefit to CAs that issue certificates only to other CAs. For example, root CAs usually issue certificates only to intermediate CAs. In this case, revocation is extremely rare and the CRL will be smaller than the traffic of a single OCSP request/response transaction. A typical CRL with no revocation records is only ~500-800 bytes. As a general practice, consider using OCSP only for issuing CAs.
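
The size argument is simple arithmetic, sketched below. It takes the ~2.5 KB per OCSP transaction figure from this article as a constant and deliberately ignores caching of OCSP responses, so treat it as a back-of-the-envelope comparison. The function name and the sample CRL sizes are made up.

```python
OCSP_TRANSACTION_BYTES = 2500  # ~2.5 KB per request/response, figure from the article


def cheaper_source(crl_size_bytes: int, validations: int) -> str:
    """Compare one CRL download against one OCSP transaction per validation."""
    ocsp_total = validations * OCSP_TRANSACTION_BYTES
    return "CRL" if crl_size_bytes <= ocsp_total else "OCSP"


# Root CA: empty CRL of ~800 bytes, a single validation.
print(cheaper_source(800, 1))
# Issuing CA: hypothetical 1 MB CRL, a client validating 10 certificates.
print(cheaper_source(1_000_000, 10))
# Same CRL, a RADIUS server validating 500 client certificates.
print(cheaper_source(1_000_000, 500))
```

The three calls correspond to the three cases discussed above: a root CA, an ordinary client of an issuing CA, and a server doing bulk validation.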

URL form

In the previous section we determined that we need a single URL for each of the CDP and AIA extensions, and that the URL will use the HTTP scheme.

NEVER use HTTPS protocol for CRT/CRL file retrieval, because CryptoAPI will permanently fail to fetch this URL.

Now we need to design the URL form: the name of the CRT/CRL file, the virtual directory (if necessary), and a separate host (if necessary). There are no significant recommendations, except that the file name should be simple and should not contain spaces and/or special characters. Here are several examples of good URLs for the “Contoso CA” root CA server:

- http://cdp.contoso.com/CertData/contoso-RCA.crt

http://cdp.contoso.com/CertData/contoso-RCA.crl

- http://pki.contoso.com/crl/contoso-RCA.crl

http://pki.contoso.com/cert/contoso-RCA.crt

The first example illustrates both CRL and CRT files stored in the same virtual directory, while the second example illustrates separate virtual directories for each file type (certificate and CRL).
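
A quick way to sanity-check a planned URL against these recommendations (HTTP only, simple file name, no spaces or special characters) is sketched below. The rule set and the function name are my own condensation of the advice in this post, not an official validator, and the check is meant for the final static URL, without CA variables in it.

```python
import re
from typing import List
from urllib.parse import urlsplit

# Letters, digits, dot, underscore, dash and slash only.
_SAFE_PATH = re.compile(r"^[A-Za-z0-9._/-]+$")


def check_pki_url(url: str) -> List[str]:
    """Return a list of problems found in a CDP/AIA URL (empty list = fine)."""
    problems = []
    parts = urlsplit(url)
    if parts.scheme != "http":
        problems.append("scheme must be http")
    if not parts.hostname:
        problems.append("missing host name")
    if not _SAFE_PATH.match(parts.path):
        problems.append("path contains spaces or special characters")
    if not parts.path.lower().endswith((".crl", ".crt")):
        problems.append("file extension should be .crl or .crt")
    return problems


print(check_pki_url("http://cdp.contoso.com/CertData/contoso-RCA.crl"))
print(check_pki_url("http://dc1.contoso.com/CertEnroll/Contoso%20CA.crl"))
```

The second call is the default installation URL from the beginning of the post; it is flagged because of the encoded space in the file name.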

Note: it is advised to use a dedicated host name and not to reuse existing host names (occupied by other web applications) when choosing the host name part of the URL. CAs are long-lived objects (they can live for several decades) and URLs are hardcoded in long-lived certificates, so you will have to maintain the URL for the entire CA lifetime. If you reuse a host name, you will run into problems when you decide to move the existing web application to another host name. With a dedicated host name, you can simply repoint it via DNS CNAME records when the physical hosting changes.

Configure CA server

We are almost done. Now we need to configure CA and web servers. The following step-by-step scheme will be used:

- CA server generates CRLs and CRT files;

- CA server publishes these files to a remote share (share on web server or DFS share, doesn’t matter which one);

- Web server picks these files from local or remote share;

- Web server transfers these files when requested.

Configuring permissions

Step 2 requires additional permission configuration. If CA and remote share are located in the same domain, then you need to configure permissions on remote share as follows:

- Share permissions: grant Allow Full Control to “Cert Publishers” predefined domain-local group;

- NTFS permissions: grant Allow Full Control to “Cert Publishers” predefined domain-local group.

If the remote share is located in a different domain:

- In the target domain (where the remote share resides), add the CA server computer accounts (all CAs) to that domain's “Cert Publishers” predefined domain-local group.

- Configure permissions on the share as follows:

- Share permissions: grant Allow Full Control to “Cert Publishers” predefined domain-local group;

- NTFS permissions: grant Allow Full Control to “Cert Publishers” predefined domain-local group.

These steps are necessary to allow CA service (which runs under Local System account) to publish files to remote share.

Configuring CRL Distribution Points extension

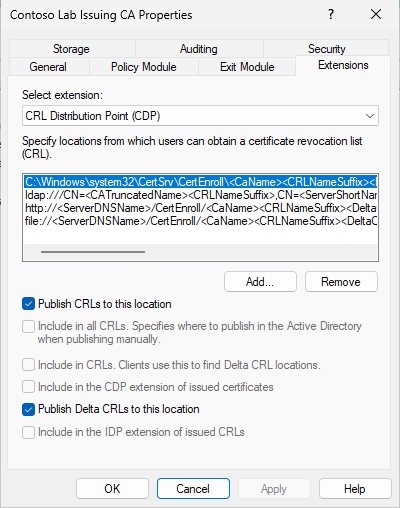

Now we can configure the CDP and AIA extensions on the CA server. To do this, open the Certification Authority MMC snap-in. In the snap-in, right-click the CA node, select Properties, and switch to the Extensions tab:

In the picture you see the default CDP locations. We will configure the CA to use the following URL in issued certificates: http://cdp.contoso.com/CertData/ica-g1.crl. Remove all entries (the ldap, http and file locations) except the first one. Do not remove the first entry, because it is used internally by the Certification Authority MMC snap-in. After that, add the following entries:

- \\ServerName\RemoteShare\ica-g1<CRLNameSuffix><DeltaCRLAllowed>.crl. Enable “Publish CRLs to this location” and, optionally, “Publish Delta CRLs to this location” (if you plan to use Delta CRLs) checkboxes.

- http://cdp.contoso.com/CertData/ica-g1<CRLNameSuffix><DeltaCRLAllowed>.crl. Enable “Include in CDP extension of issued certificates” and, optionally, “Include in CRLs. Clients use this to find Delta CRL locations” (if you plan to use Delta CRLs) checkboxes.

First entry specifies the location to which actual files should be published. Replace “ServerName” and “RemoteShare” with actual values. Also, you will need to change CRL file name. The only part that remains unchanged is: <CRLNameSuffix><DeltaCRLAllowed>. These special variables are necessary to support multiple CA certificates and Delta CRLs. For more details read the following article: Root CA certificate renewal.

Second URL will be published in issued certificates. CRL file name and special variables MUST match in both URLs.
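
The same entries can also be stored through the CA registry value CRLPublicationURLs (for example with certutil -setreg CA\CRLPublicationURLs), where each entry is a numeric flag sum followed by a colon and the location, and entries are separated by a literal \n. The sketch below only builds that string. The flag values and the %3, %8 and %9 tokens (CA name, <CRLNameSuffix>, <DeltaCRLAllowed>) reflect my understanding of the format, and the class and function names are made up, so compare the result with the output of certutil -getreg CA\CRLPublicationURLs on your own CA before applying anything.

```python
from enum import IntFlag
from typing import Iterable, Tuple


class CrlFlag(IntFlag):
    """Checkbox values on the CDP tab (assumed numeric values)."""
    PUBLISH_CRL = 1            # "Publish CRLs to this location"
    ADD_TO_CERT_CDP = 2        # "Include in the CDP extension of issued certificates"
    ADD_TO_FRESHEST_CRL = 4    # "Include in CRLs. Clients use this to find Delta CRL locations"
    PUBLISH_DELTA_CRL = 64     # "Publish Delta CRLs to this location"


def publication_value(entries: Iterable[Tuple[CrlFlag, str]]) -> str:
    """Join (flags, location) pairs into one CRLPublicationURLs string."""
    # Entries are separated by a literal backslash followed by "n".
    return "\\n".join(f"{int(flags)}:{location}" for flags, location in entries)


entries = [
    # Default local entry, kept because the MMC snap-in relies on it.
    (CrlFlag.PUBLISH_CRL | CrlFlag.PUBLISH_DELTA_CRL,
     r"C:\Windows\system32\CertSrv\CertEnroll\%3%8%9.crl"),
    # Publication target on the remote share (placeholder server/share names).
    (CrlFlag.PUBLISH_CRL | CrlFlag.PUBLISH_DELTA_CRL,
     r"\\ServerName\RemoteShare\ica-g1%8%9.crl"),
    # URL that ends up in issued certificates.
    (CrlFlag.ADD_TO_CERT_CDP | CrlFlag.ADD_TO_FRESHEST_CRL,
     "http://cdp.contoso.com/CertData/ica-g1%8%9.crl"),
]

print(publication_value(entries))
```

Note that the file name part (ica-g1%8%9.crl) is identical in the UNC path and in the HTTP URL, which is exactly the matching requirement stated above.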

Configuring Authority Information Access extension

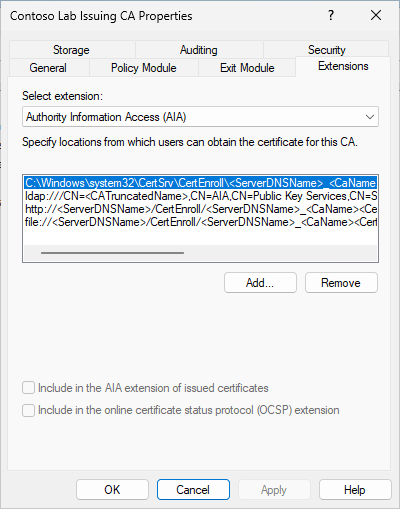

When finished, apply changes and switch to Authority Information Access (AIA) extension. Here are default AIA URLs:

Again, we see 4 URLs. You should leave the first location (which points to the local file system) and remove the remaining locations. We will configure the CA to use the following URL in issued certificates: http://cdp.contoso.com/CertData/ica-g1.crt. Unfortunately, Windows CA does not support custom CA certificate publication locations, so you will have to rename and copy the file to the remote share manually. Therefore you will need to add only one location:

- http://cdp.contoso.com/CertData/ica-g1<CertificateName>.crt. Enable the “Include in the AIA extension of issued certificates” checkbox. If you plan to use OCSP, add another location that points to an OCSP server and enable only the “Include in the online certificate status protocol (OCSP) extension” checkbox.

The purpose of the special <CertificateName> variable is described in the Root CA certificate renewal article. Apply changes and restart CA service when prompted.
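
Because the CA certificate file has to be renamed and copied by hand, a small script can help avoid typos. The following is a minimal sketch. The source file name follows the default naming shown at the beginning of this post, while the share path, the published file name and the function name are placeholders you would replace with your own values.

```python
import shutil
from pathlib import Path


def publish_ca_certificate(source: Path, share: Path, published_name: str) -> Path:
    """Copy the CA certificate to the web server share under its public name."""
    if not source.is_file():
        raise FileNotFoundError(f"CA certificate not found: {source}")
    target = share / published_name
    shutil.copy2(source, target)  # overwrites an older copy, keeps timestamps
    return target


if __name__ == "__main__":
    # Placeholder paths: adjust to your CA name and remote share.
    publish_ca_certificate(
        Path(r"C:\Windows\System32\CertSrv\CertEnroll\DC1.contoso.com_Contoso CA.crt"),
        Path(r"\\ServerName\RemoteShare"),
        "ica-g1.crt",
    )
```

The published name must match what the <CertificateName> variable produces in the AIA URL, so after a CA certificate renewal the renewed certificate needs its own copy under the suffixed name.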

Verify settings

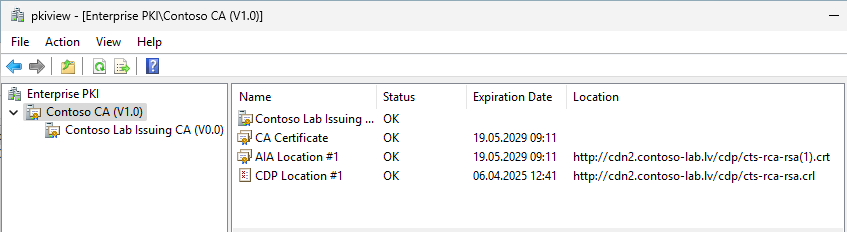

If you feel that everything is configured properly, you should verify the new configuration. To verify it, run the pkiview.msc MMC snap-in. This console allows you to check CDP/AIA URL availability and whether the published files are correct. Here are 2 screenshots from my test lab (2-tier hierarchy):

This screenshot is from the root CA. As you can see, its version is V1.0, which indicates that the CA certificate was renewed once with the same key pair. And you see the <CertificateName> variable in action: it adds a certificate index in parentheses, (1).

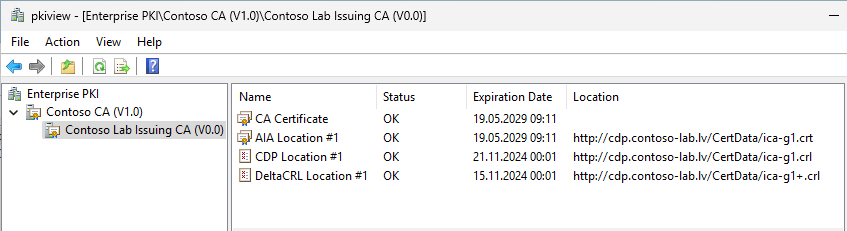

And here is another screenshot from subordinate CA:

Root CA version is V1.2, which indicates that the CA certificate was renewed once and the last time it was renewed with the existing key pair. In this example you see the following variables in action: the <CertificateName> variable in the CRT file name and <CRLNameSuffix><DeltaCRLAllowed> in the CRL file names. <CRLNameSuffix> adds the key index in parentheses, (2), and <DeltaCRLAllowed> adds a plus sign “+” at the end of the file name to indicate that the file is a Delta CRL. If all locations report “Ok” status, the configuration is fine; otherwise something went wrong and you should fix it *before* you start using the CA server.
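
The effect of the two CRL variables on file names can be sketched as follows. The sketch assumes that key index 0 produces no suffix at all (as with a freshly installed CA) and uses a made-up function name. It only illustrates which files the web server must be able to serve.

```python
from typing import List


def crl_file_names(base: str, key_index: int, delta_enabled: bool) -> List[str]:
    """Expand <CRLNameSuffix><DeltaCRLAllowed> for one CA key.

    <CRLNameSuffix> becomes "(key_index)" for renewed keys,
    <DeltaCRLAllowed> becomes "+" in the Delta CRL file name.
    """
    suffix = f"({key_index})" if key_index > 0 else ""
    names = [f"{base}{suffix}.crl"]            # base CRL
    if delta_enabled:
        names.append(f"{base}{suffix}+.crl")   # delta CRL
    return names


print(crl_file_names("ica-g1", 0, False))
print(crl_file_names("ica-g1", 2, True))
```

If you serve Delta CRLs from IIS, keep in mind that the “+” character in the file name may require extra web server configuration.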

If something went wrong and you fixed it, you may need to revoke the latest certificate based on “CA Exchange” template and re-run the console. Pkiview.msc relies on CA Exchange certificate to collect configured CRL/CRT URLs.

Everything in this article is based on my own opinion, my experience in the PKI field, and best practices (as I see them), which may or may not conform to Microsoft recommendations. If you want to dig deeper, you should check the following whitepapers:

- Certificate Status and Revocation Checking

- Certificate Revocation Checking in Windows Vista and Windows Server 2008

Hey Vadims, Great article and exactly how I design these ADCS elements for a modern PKI deployment! It encapsulates the key design features really well - thanks! Cheers JJ

Thanks! It is one of the less described topics about PKI fundamentals on the internet. Hope to see fewer questions about "Revocation server offline" issues :)

Best article I have found. Just the topic I was looking for! Thank you and keep on going! :)

Read many blogs and articles about this stuff, but this one is by far the best. All those silly URLs with spaces, which are used by other people, it isn't necessary at all! Still doubting if I shouldn't add the LDAP path anyway. Most of our clients are members of the AD.

> Most of our clients are member of the AD.

Eventually we decided to completely move from LDAP regardless of whether certificate clients are domain members or not.

The more I read, the more it appears that end clients are even more sensitive to CRL distribution point failure than they are to CA failure. Yet no-one seems to have published a best practices regarding highly available CRL / AIA distribution points (or HA OCSP, or HA SCEP, or HA web enrollment). If you place the CRL distro point on a web farm using NLB (that is separate from the CA servers), do you create and set up scheduled scripts to regularly copy the CRL and CRT files to each node's storage, since storage is not typically shared in an NLB situation? If I have a separate PKI infrastructure for ECDSA vs RSA, can I share the same website URL for both CRL distro points, or is it better to have separate ones? Going beyond just CRL point (and beyond the original scope of this article), what about Web Enrollment services, can those be put on an NLB webfarm? Can that one webfarm do web enrollment for both RSA and ECDSA?

Answered the web enrollment part of my own question: https://blogs.technet.microsoft.com/askds/2009/04/22/how-to-configure-the-windows-server-2008-ca-web-enrollment-proxy/

Great article !

I wish I found this a while ago. It answers several "But why..." questions that I wanted confirmation of.

Kudos.

Hello,

Thanks, great article :)

I don't understand why ldap url is removed from AIA and CDP's locations. If a client is outside the organisation (as you say in a vpn access case) or non-domain joined, i understand why HTTP location is necessary. But for domain-joined client / internal client, is LDAP URL not better ?

> But for domain-joined client / internal client, is LDAP URL not better ?

not better. First, you will have to use separate CAs for internal and external clients, which can be costly. Another point is that LDAP doesn't support the improved revocation experience provided by caching control and E-tag HTTP headers. Really, there is no real need to bother with LDAP at all.

Very confused on this-

"Also, you will need to change CRL file name."

1. Why? Can't this be created correctly in initial configuration?

2. Where do you change this?

I'm assuming the C:\Windows\System32\CertSrv\CertEnroll

If you change it elsewhere and it's a different name than what's stored on the server, isn't that a security issue?

> 1. Why? Can't this be created correctly in initial configuration?

no, you cannot configure CDP/AIA during installation, you have to change it after CA installation.

> If you change it elsewhere and it's a different name than what's stored on the server, isn't that a security issue?

no, it is not a security issue. Because if you get access to this file on the server, it doesn't reveal anything useful to attackers.

Just a quick question. I am new to this and have been following articles to have this set up. We have CBA configured for O365 access control and there is a requirement to have the CRL and AIA accessible over the internet... We have the CRL and AIA published over the internet. Although the CRL does not contain any private information, the AIA contains the certs from the CAs, is this a security risk of any sort?

> Although the CRL does not contain any private information, the AIA contains the certs from the CAs, is this a security risk of any sort?

No, there are no risks. Public certificates are meant to be public.

Hi,

Thank you for the article. I have a quick question that is really bothering me. If I want to configure a highly available HTTP CRL DP, I configured two Web (IIS) servers as required and then I can use DNS Round Robin or NLB between them. The thing that I cannot get my head around is:

On Server1, the HTTP CDP could look something like: http://www.contoso.com/pki/contoso-RCA1.crl

On Server2, the HTTP CDP could look something like: http://www.contoso.com/pki/contoso-RCA2.crl

If I add the two HTTP URLs to the CDPs of Server1 and Server2, would that work? Because I understand that clients only check the first entry of each type i.e. the first LDAP and then the first HTTP. Hence, it will ignore the second one, isn't that right?

If I use DNS RoundRobin, for example, having two "www" A records, one pointing at Server1 and one at Server2. In that case, which URL will I add to the CDP list? The first one or the second one? If I add the first one but the CRL file name on the second one, contoso-RCA2.crl, is different then that would give an error if a user hits the second server.

I am missing something quite trivial probably, right?

Would really appreciate the help.

Best regards!

> On Server1, the HTTP CDP could look something like: http://www.contoso.com/pki/contoso-RCA1.crl

> On Server2, the HTTP CDP could look something like: http://www.contoso.com/pki/contoso-RCA2.crl

It is not quite correct. When we talk about HA, the URL to a resource is unified. The client has only a single URL and, depending on DNS or the load balancer, it is directed to the right server. That is, in the given case you put only one CDP URL: http://www.contoso.com/pki/contoso-RCA1.crl, and this exact file (contoso-rca1.crl) must be available on each web server. How you deliver the file there is an out-of-band process: make a copy on each server, or have a DFS share (with both servers pointing to that share), or use another solution.

Thank you for that. Probably, I should have mentioned that we have 2 subordinate CAs so that's why each one of them generates a different CRL. How do we manage that? If CA1 issues a certificate with CRL1 as the CDP, hence the HTTP CDP looking like this: http://www.contoso.com/pki/contoso-CA1.crl

But then if we have DNS Round Robin, 2 x www records, each pointing at one of the CDP and if DNS redirects the user checking for revocation to the second path which doesn't have the contoso-CA1.crl published, that would cause an issue, wouldn't it?

In other words, should we add the two different HTTP CDPs to each Sub CA?

Would really appreciate your help.

> In other words, should we add the two different HTTP CDPs to each Sub CA?

nope, again. If you have two CAs, then, first CA (say, SubCA1) includes the following URL in CDP: http://www.contoso.com/pki/contoso-subca1.crl

You configure both web servers to serve "contoso-subca1.crl" file. Second CA (say, SubCA2) includes the following URL in CDP: http://www.contoso.com/pki/contoso-subca2.crl and configure web servers to serve that file too. Eventually, physical folder (mounted as pki virtual directory in IIS) will serve two CRL files from both CAs and both web servers will have identical configuration in respect to PKI web site. It really doesn't matter how many CAs you have as long as they share the same web site configuration to serve clients.

Great, thank you so much. Much appreciated!

Vadims:

Great Article!! I am wondering whether you can have both URL for the file based CRL and another for OCSP. I need to migrate to OCSP. I know that the modification needs to take place in the extensions portion of the CA.

Thanks

> I am wondering whether you can have both URL for the file based CRL and another for OCSP

why not? Though, note that OCSP URLs are added in Authority Information Access extension, not in CDP. Also, note that OCSP is not a replacement for CRL, you will have to provide both, CRL and OCSP revocation providers in certificate.

Hello Vadims, great article. How do you get pkiview.msc to run on the standalone root CA?

'An Enterprise CA cannot be located. Verify that an Enterprise CA exists in your forest and is listed in the Enrollment Services container on your domain controller.'

Br, Tim

You can't run pkiview.msc in non-domain environments. At least one Enterprise CA must be installed. pkiview.msc automatically builds PKI hierarchy based on certificate chains.

Great article, I appreciate the work that went into this.

I'm planning on using a webserver in a DMZ, non domain joined. My question: Is there a way to setup permission on the DMZ webserver to allow publishing to the AIA/CDP points from the domain joined Enterprise Sub CA? Or, am I going to have to publish them manually?

Again, thank you.

> Or, am I going to have to publish them manually?

yes, you will have to publish them manually. CA service runs under local system account and workgroup computers cannot authenticate themselves on other computers. However, there is a workaround: write a simple script that will run on CA server (or other computer that has access to CA source certificates and CRLs) and will copy them to web server in workgroup. Then assign it to a task scheduler where you can configure user account to use on web server. You will have to create a local user that will match (user name and password) with the one on web server.

>While 10 years ago it was enough for most installations, it is not very practical nowadays. LDAP protocol mainly can be accessed only by Active Directory forest members. If you have clients outside of your domain

>or when users cannot reach domain controller (when an employee attempts to establish a VPN to corporate network from internet), then LDAP URL is not their best option and you should use HTTP protocol as it is

>supported by any kind of clients (even non-Windows)

That claim is utter bullshit, LDAP is an Open Source Network Application Protocol it was first developed on Linux so non-Windows, it is also widely used by millions of devices every day that are not in a domain forest for example ((Cisco, Zyxel, Draytek) Switch, Routers, Firewalls), Software Applications. a VPN should have access to network resources and that includes being able to talk to servers on that network so you would just connect to it.

> LDAP is an Open Source Network Application Protocol it was first developed on Linux so non-Windows

It doesn't matter much where and when LDAP was developed. I'm talking about current practices. On Windows, only the AD LDAP implementation is widely used. Other LDAP server implementations (most are on network devices) vary from organization to organization. Therefore my recommendation is still the same: don't waste effort on building a revocation server using LDAP, spend it on an affordable and globally usable HTTP server.

Hi Vadim,

I'm setting up a new PKI on 2016 windows servers. My server is doing the Issuing and the Web enrollment

I try to publish my CDP CRL List but I always get a deny when I do the "certutil -CRL"

This is due to the last entry in this command :

certutil -setreg CA\CRLPublicationURLs "65:C:\Windows\system32\CertSrv\CertEnroll\%3%8%9.crl\n79:ldap:///CN=%7%8,CN=%2,CN=CDP,CN=Public Key Services,CN=Services,%6%10\n6:http://pki.clstjean.be/CertEnroll/%3%8%9.crl\n65:\\<Server UNC>\CertEnroll\%3%8%9.crl"

I'm able to write in this directory with the account I use to perform the command to publish

If I remove the entry, then the "certutil -CRL" works perfectly

Do you have an idea ?

Are UNC not supported anymore ?

So i

I found the problem but I still don't understand

My Share "CertEnroll" is on "c:\certenroll"

If on share permissions, I allow everyone with change rights it works

I noticed the "certutil -crl" use the computer account to write in this folder and the computer account is in the "Cert Publisher" ad group which has change permission on the share and modify rights on the security of the folder

I also tried to put directly the computer account on the share and in the security but it does not work

Only allowing Change for Everyone on the share permission solve the issue

strange, isn't it ?

Julien Baldini,

I have never faced such an issue. For share permissions you should consider creating a domain-local group and adding the Cert Publishers group there. Use the domain-local group to assign share and NTFS permissions. In other words, use the AGLP pattern.

Hi Vadims,

>>You configure both web servers to serve "contoso-subca1.crl" file.

How is this done ?

> How is this done ?

by putting the file to both web servers, or put the file on a common share accessible by both web servers.

Considering non-domain environments... When AIA is not configured, thus not available in certificates, then Root and Sub CA must be somehow available for CCE. The Root Cert should be installed in the Trusted Root Certification Authorities Certificate Store. But how about Subordinate CA/Intermediate CA certificate? I understand it must not be explicitly trusted, but must be available to CryptoAPI for CCE. Where to put it?

Thank you the excellent article AND the followup Q&A! Much appreciated!

> Thank you the excellent article AND the followup Q&A! Much appreciated!

No problem!

Hi Vadims,

Can a CA have multiple HTTP URLs? If yes, do all these HTTP URLs point to the same CRL file or each URL has its own CRL file?

> If yes, do all these HTTP URLs point to the same CRL file or each URL has its own CRL file?

Yes, you can have multiple HTTP URLs. They should point to their own copy of same CRL file.

Hi Vadims, do you have any experience in delays which occur when (delta) CRLs are published to two file locations (e.g. \\server1\... and \\server2\..) and one location is not available?

We find that the CRL is created in time, but not written to any location (LDAP, file, not even c:\system32\certenroll) until the attempt to the one file location times out (10 minutes, seems hardcoded).

This is because we have 2 OCSP responders behind an NLB and we didn't want to have any scheduled task or so to copy one file around (timing issues). Now we found this behaviour when one server went offline.

So publishing to a highly available storage location (e.g. netapp filer cluster) with a DFS share seems the only solution (we don't want to bother with Windows Server cluster and cluster shared volumes). Or do you see any other way to overcome such single failure of a file:// CDP location?

What you are experiencing is expected behavior. CA publishes files one by one and if URL is failing, next will be attempted only after first URL is completed (either, published or failed).

Actually, DFS is the correct solution since it was intended to perform this task: abstract clients from actual storage. What is behind DFS is completely irrelevant for clients.

Hi Vadims,

I have a 2 tiers architecture PKI with several Sub CAs, one per forest/domain (same thing in my case).

Each forest will expose its own URL web server for CDP/AIA/OCSP.

On the Root CA, do I have to add CDP/AIA extension in each Sub CA ? If yes, I have to modify the http address each time I generate a new forest Sub CA, correct ?

> On the Root CA, do I have to add a CDP/AIA extension for each Sub CA?

No. The root CA should include only a single HTTP URL that is accessible by all clients. Trying to include root CA CDP/AIA URLs for each domain is a non-working design, because URLs are read in the exact order in which they are listed. The 3rd and subsequent URLs won't be reached, because the chaining engine will report a timeout after attempting the 2nd URL. So don't even try this approach. When CRLs/certs are used from the internet (say, a WFH scenario where employees connect to corporate resources from home), my recommendation is to use some sort of CDN, which is highly available and scalable. All major cloud providers (Azure, AWS, Google Cloud) offer a CDN service.

On the root CA I was thinking about having only 1 URL at a time :

- Defining http://domain1/ for CDP/AIA then signing the sub ca for forest1/domain1

- Replacing the http://domain1/ by http://domain2 for CDP/AIA then signing the sub ca for forest2/domain2 and so on.

- Switch off the root

In this way each "sub ca verifications" will point to the proper url in the correct forest

The point is that I don't have a global http server to retrieve CDP/AIA, I have URLs per forest.

If it's a solution, I don't really like it, but I don't see another one.

As long as you can follow this strategy consistently, it is acceptable to some degree. Keep in mind that you will have to use the same strategy during subordinate CA renewals and copy the root certificate and CRLs to all locations.

Yes, that's why I don't like this solution: it's so easy to define the wrong URL when generating/renewing the Sub CA.

Is it not possible to avoid the AIA/CDP definitions in Sub CA certificates?

> Is it not possible to avoid the AIA/CDP definitions in Sub CA certificates?

No, it's not possible. So you should consider finding a globally (within requirements) accessible HTTP endpoint for the root CA.

In addition to the Sub CA AIA/CDP, the Root CA AIA/CDP will be copied to each domain (1 per forest):

- On each C:\Windows\System32\certsrv\CertEnroll\ (this sounds strange to me for the root CA AIA)

- On each web server (AIA+CDP)

Hi Vadims,

My SubCA, which is based on an RSA certificate, issues both RSA (Windows) and ECDSA (specific devices) certificates. It generates a CRL/delta CRL using the signing/hash algorithm SHA384RSA/SHA384.

My problem is that the specific devices do not accept the CRL and I don't know why. My hypothesis concerns the algorithm.

Is it possible to generate additional CRLs (a delta CRL is not useful in this case) with an ECDSA signing/hash algorithm for these specific devices?

1) Not necessary, it's supposed to work

2) No I have to create a specific subCA

> My hypothesis concerns the algorithm

This is not correct. The CRL signature algorithm is not related to the algorithm used in an issued certificate. The algorithm for an issued certificate is chosen by the client, while the algorithm used to sign the CRL depends on the CA. Your configuration is supposed to work, and you have to debug it on the client, for example with the "certutil -verify -urlfetch" command.

> Is it possible to generate additional CRLs (a delta CRL is not useful in this case) with an ECDSA signing/hash algorithm

It is not possible. The CA must have an ECC key in order to create ECDSA signatures.

Hello, I am deploying a single offline root CA and two issuing CAs, site-ca1 and site-ca2, both hosting all roles (CDP, AIA, enrollment, OCSP). Can I add DFSR to both servers, host the CRL and AIA HTTP URLs on a replicated folder, and then add both CRL and AIA URLs as locations on both servers? I.e., is DFS even needed if both URLs are set and both CAs post their CRLs?

CDP line1: http://site-ca1/site-ca1.crl

CDP line2: http://site-ca2/site-ca2.crl

and the same for AIA on both servers?

Great Post :)

My question is about E-Tags and setting the time intervals for an IIS web server acting as the HTTP endpoint for CRLs.

I am looking at how to configure/change the values, and I think the following post explains where the setting resides and how to change it (should you need to). However, I am not sure if this is the correct place/setting, as it is not 100% clear to me.

Can you please confirm if the above link shows the correct place in IIS to change the behaviour of the E-Tag and Max-Age?

Thank you Vadims

> Can you please confirm if the above link shows the correct place in IIS to change the behaviour of the E-Tag and Max-Age?

Yes. E-Tag and Max-Age headers are maintained by IIS, so any changes should be made in IIS. However, you should understand the implications of changing the default values and change them only if there is a justification for it. In the vast majority of cases the default values are sufficient.
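
Before changing anything, it can help to see which values the web server actually sends. The following is a minimal sketch that extracts the max-age directive and the ETag from a set of HTTP response headers. The function name and the sample header values are made up for illustration; the headers themselves would come from a response for your CRL URL.

```python
import re


def cache_policy(headers):
    """Return (max_age_seconds, etag) from a mapping of HTTP response headers."""
    # Header names are case-insensitive, so normalize them first.
    lowered = {name.lower(): value for name, value in headers.items()}
    match = re.search(r"max-age\s*=\s*(\d+)",
                      lowered.get("cache-control", ""), re.IGNORECASE)
    max_age = int(match.group(1)) if match else None
    return max_age, lowered.get("etag")


if __name__ == "__main__":
    # Sample values only; real ones depend on the server configuration.
    sample = {"Cache-Control": "max-age=604800", "ETag": '"example-etag"'}
    print(cache_policy(sample))
```

A result of `None` for max-age means the server sent no such directive, so the client has no explicit freshness lifetime from the Cache-Control header.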

Hello Vadims

Further to my last quick comment, I forgot to also ask as part of that comment the following

Does the Windows OS (windows 10 and windows server 2019) check/utilize the e-tag and max-age in different scenarios for example

A windows 10 computer client checking the revocation status of a code signing certificate, or client authentication, or server authentication certificate etc.

In other words, if I was connecting to a WEB site I would likely be using a WEB Browser on Windows 10 and receive the server authentication certificate from the WEB server (then validate certificate). When checking signed code/application locally I would look at the trusted publisher's store, check CRL etc, so different ways of consuming/using certificate presented.

Is the e-tag, max-age relevant/checked (all else being equal) for these different use cases, as it is the job of the revocation checking code/engine (for want of a better word); which is responsible for utilizing the e-tag/max-age when using an HTTP endpoint for CRL checking (like IIS 8.5 or above) and therefore not relevant to the application at the higher level being used?

Thank you Vadims

> so different ways of consuming/using certificate presented.

It doesn't really matter, because it is the CryptoAPI client that performs revocation cache maintenance, and it makes no distinction based on how and where the certificate is consumed.

> Is the e-tag, max-age relevant/checked (all else being equal) for these different use cases

In fact, these operations (cache maintenance and certificate validation) are independent, because E-Tag/Max-Age checking and processing is a separate, isolated task that is executed on an internal timer. It doesn't depend on application activity.

Hi,

I have a quick question, thanks

Does the CCE on Windows consider the proxy settings on the Windows computer?

For example, CRL and OCSP use port 80, which is also the port for general internet web browsing. Suppose a Group Policy is pushed down to my corporate laptop which states that the web browser must use Proxy1.Company.com.

As CRL/OCSP also use port 80, does the CCE take any notice of the proxy settings on Windows?

Or is there a separate registry key which, if set, forces the CCE to go via a specific proxy when reaching out to a CRL or OCSP responder?

Or does it just try to use standard TCP/IP routing and ignore such proxy settings?

Thanks

Maria

> Does the CCE on Windows consider the proxy settings on the Windows computer?

Yes, CCE respects proxy settings.

Thank you very much for answering my question

Maria

Does CryptoAPI still not support HTTPS even on modern operating systems like Windows 10/11 or Server 2019?

Thanks

> Does CryptoAPI still not support HTTPS even on modern operating systems like Windows 10/11 or Server 2019?

It does not and is unlikely to. Can you tell me why it should? You should understand that HTTPS for CDP/AIA provides no security and adds extra cost to certificate validation. Therefore, I don't see why anyone would want HTTPS here.

Vadims,

First, great article! Thanks for publishing it and responding to many comments and questions. I am a little confused about the E-Tag and Max-Age headers for CRL HTTP locations and would appreciate it if you could clear up my confusion.

I'm looking to host the CRLs for the offline Root CA and a couple of Issuing CAs on the same web farm, accessible by a single URL (http://pki.abc.com/pki). You mentioned that the max-age header out of the box is set to 1 week and that in most cases this should not be changed. When I look at the default IIS 10 settings, I see the cacheControlMaxAge attribute set to 1.00:00:00 (1 day) and cacheControlMode set to NoControl. The setEtag attribute is set to True. Keeping in mind that our offline root CA will publish a new CRL every 6 months and all Issuing CAs every 7 days, would you recommend changing cacheControlMaxAge to 7.00:00:00 (1 week) and cacheControlMode to UseMaxAge? In my opinion, this would work great for the offline root CA, as it would force the clients to pull a new CRL (if changed) every week. For the Issuing CA CRLs, on the other hand, if we were to publish a new CRL out of band, the clients would not get the new CRL until the 7 days dictated by the max-age header expire. Is that a true statement?

Would it make sense to have one path /pki for issuing CAs CRLs and let's say /rootpki for the offline Root CA CRL? That way you could have different max-age settings for root CA and Issuing CAs.

Thanks again for all you do!

Patrick

How can I configure a Standalone Root CA in a 2-level PKI hierarchy, where the second level is an Enterprise Subordinate CA, so that pkiview.msc does not show errors related to CDP and AIA reachability for it? Is there a way to avoid them?

Hi Vadims,

Would you know if Microsoft CAs can be configured to automatically publish their CRLs to AWS S3 buckets instead of traditional web servers?

Thanks,

Patrick

> Would you know if Microsoft CAs can be configured to automatically publish their CRLs to AWS S3 buckets instead of traditional web servers?

Do you mean publishing the actual files, or designating CDP URLs that point to AWS? Physical file publication is not supported. However, if you create a separate automation task that copies CRL files to AWS web servers, then they can be used as distribution points. I'm working on a blog post that explains how to implement this scenario with Microsoft Azure (CDN and storage accounts).
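
A separate automation task of this kind could look roughly like the sketch below. It copies new or changed CRL files from a source folder to one or more target folders and handles every target independently, so one unavailable target does not block the others. The function name and the idea of running it from a scheduled task are assumptions; uploading to a cloud storage service would replace the copy step with that provider's own tooling, which is not shown here.

```python
import filecmp
import shutil
from pathlib import Path


def sync_crls(source_dir, target_dirs, pattern="*.crl"):
    """Copy new or changed CRL files to every target; return (copied, errors)."""
    copied, errors = [], []
    for crl in sorted(Path(source_dir).glob(pattern)):
        for target in target_dirs:
            dest = Path(target) / crl.name
            try:
                # Skip files whose content is already identical at the target.
                if dest.exists() and filecmp.cmp(crl, dest, shallow=False):
                    continue
                # Copy under a temporary name and rename afterwards, so that
                # clients never download a half-written CRL.
                tmp = dest.with_name(dest.name + ".tmp")
                shutil.copyfile(crl, tmp)
                tmp.replace(dest)
                copied.append(str(dest))
            except OSError as exc:
                # A failing target is recorded and does not stop the others.
                errors.append((str(dest), str(exc)))
    return copied, errors
```

The source would typically be the CA's CertEnroll folder and the targets the folders served by the web servers. Anything returned in `errors` should be logged and alerted on, because a stale CRL at a distribution point eventually breaks revocation checking.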

Thanks for your in-depth explanation of ADCS!

I still have a question about AIA: I left only the HTTP entry (the first location was removed), and I still have the local .crt file generated in c:\windows\system32\certsrv\certenroll. Is it OK? If I understand correctly, this file is just the public key and will never be updated, so all I have to do is copy it to my web server and forget about it.

Am I right ?

> Is it OK?

Yes, the first entry actually has no effect: the CA is hardcoded to publish its certificate at that location.

> If I understand correctly, this file is just the public key and will never be updated so all I have to do is to copy it on my web server and forget about it.

right. You have to repeat this operation only when you renew your CA certificate (which is a rare operation).

Guys.... I need your help. Creating a 2 Tier setup. Configured RootCA adjusted path for CDP and AIA and forced a revocation update. Created cert for SubCA. Copied all the files in the folder. I can browse to the folder and see my files.

Imported the cert on the SubCA and everything starts fine. Modified CDP and AIA on the SubCA as well and forced an update. I see it is updating C:\Windows\System32\CertSrv\CertEnroll, but my HTTP folder is not updated :-(

This is path from browser: http://pki.ra.local/CertEnroll/

path in CDP conf: http://pki.ra.local/CertEnroll/<CaName><CRLNameSuffix><DeltaCRLAllowed>.crl

The CertEnroll folder has FULL control for "cert publishers" in advanced sharing and full control in security tab.

What do I miss???? Thanks for any help

gert:

I'll help some on this wherever I can.

> Copied all the files in the folder. I can browse to the folder and see my files.

Is this on the same server or a different dedicated web server?

> I see it is updating on C:\Windows\System32\CertSrv\CertEnroll but my HTTP folder stays unupdated :-(

In IIS, do you have directory browsing enabled? Also, do you have double escaping enabled as well? Is the user that you are using to manage the CA part of this Cert Publishers group?

Hi Genek, I'll see if I can help answer this:

> How can I configure a Standalone Root CA in a 2-level PKI hierarchy, where the second level is an Enterprise Subordinate CA, so that pkiview.msc does not show errors related to CDP and AIA reachability for it? Is there a way to avoid them?

Have you checked to make sure your Root CA CRLs are copied over to the same location as your Enterprise one? Also, you'll want to make sure that you have double escaping enabled. If it is disabled, it will typically have a hard time downloading the delta CRLs, which can cause pkiview to trip, if I recall correctly.
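
The reason double escaping matters becomes visible when the variables in a CDP path such as <CaName><CRLNameSuffix><DeltaCRLAllowed>.crl are expanded: the delta CRL file name ends with a "+" sign, and IIS request filtering rejects such URLs unless double escaping is allowed. Here is a small sketch of the expansion. The function name and the CA name are made up for illustration, and it assumes a CA name that needs no sanitizing.

```python
def crl_file_names(ca_name, key_index=0, delta_enabled=True):
    """Expand <CaName><CRLNameSuffix><DeltaCRLAllowed>.crl into file names."""
    # <CRLNameSuffix> is empty for the first CA key and "(n)" after the CA
    # certificate has been renewed with a new key pair.
    suffix = f"({key_index})" if key_index > 0 else ""
    names = [f"{ca_name}{suffix}.crl"]
    if delta_enabled:
        # <DeltaCRLAllowed> appends "+" to the delta CRL file name.
        names.append(f"{ca_name}{suffix}+.crl")
    return names


if __name__ == "__main__":
    # "Contoso-CA" is a hypothetical CA name.
    print(crl_file_names("Contoso-CA"))
    print(crl_file_names("Contoso-CA", key_index=1))
```

If only the file with "+" in its name fails to download while the base CRL works, the double escaping setting on the web server is the first thing to check.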

Great article!

The link for "Certificate Chaining Engine (CCE)" is no longer available: http://social.technet.microsoft.com/wiki/contents/articles/3147.certificate-chaining-engine-cce.aspx

> The link for "Certificate Chaining Engine (CCE)" is no longer available

Yeah, the TechNet wiki is gone, along with TechNet/MSDN blogs, TechNet Gallery and so on. I've updated the link to point to the current version of this article in my blog.

In general, is the CDP/AIA configuration identical between the Root CA and the Subordinate CA? Or does each tier generally have a different configuration in a two-tier deployment?

> In general, is the CDP/AIA configuration identical between the Root CA and the Subordinate CA?

Yes.

I have a use-case where it does not appear that my certificate is getting validated against the CDP even though I have the following as part of my cert:

    X509v3 extensions:
        X509v3 Extended Key Usage:
            TLS Web Client Authentication
        X509v3 CRL Distribution Points:
            Full Name:
              URI:http://host.domain:7777/rootca.crl

A couple of questions for you, if you don't mind:

- I have used "URI" instead of "URL" as you show in your examples. Does that matter?

- In my case the CA certificate does not include the "X509v3 CRL Distribution Points" extension. Only the end-user certificate contains the CDP endpoints. Is it required for the trusted CA cert to contain the CDP entries?

Excellent documentation! A real enhancement to standard MS documentation.

Can you please clarify what you mean by "rename" and "copy" the file to a remote share?

"Unfortunately, Windows CA do not support custom CA certificate publication locations, you will have to rename and copy the file to remote share manually."

Typo?

(check path to extension)

http://pki.contoso.com/crl/contoso-RCA.crt

http://pki.contoso.com/cert/contoso-RCA.crl
